AI Detector: Your Essential Guide to Understanding and Using AI Content Detection Tools

- AI detectors analyze text patterns to identify potential AI-generated content, acting as a crucial tool in maintaining academic integrity and content authenticity.

- While effective, AI detectors are not foolproof and can produce false positives or negatives, necessitating a human review of flagged content.

- The technology behind AI detectors involves sophisticated natural language processing (NLP) and machine learning algorithms that learn to distinguish human vs. AI writing styles.

- Key factors in AI detection include perplexity, burstiness, sentence structure, vocabulary choice, and the presence of common AI-generated phrases.

- Ethical considerations are paramount; AI detectors should be used responsibly to support, not replace, human judgment and critical thinking.

What is an AI Detector?

An AI detector is a software tool designed to analyze written text and determine the likelihood that it was generated by an artificial intelligence (AI) model, rather than a human author. These tools are becoming increasingly vital in academic, professional, and creative fields as AI language models like GPT-3, GPT-4, and others become more sophisticated and accessible, capable of producing human-like text at scale. In our testing of various AI detectors, we've observed a significant improvement in their accuracy over the past year, though a perfect system remains elusive. The core function of an AI detector is to identify linguistic patterns and characteristics that are statistically more common in AI-generated content than in human writing. This includes analyzing elements such as sentence complexity, vocabulary predictability, and the overall 'flow' of the text. As of early 2026, the demand for reliable AI detection solutions has surged, driven by concerns about plagiarism, misinformation, and the devaluation of original human work.

Understanding the core principles of AI detection is key.

The technology behind these detectors is constantly evolving. Early versions relied on simpler pattern matching, but modern AI detectors leverage advanced Natural Language Processing (NLP) and Machine Learning (ML) techniques. These algorithms are trained on vast datasets of both human-written and AI-generated text to learn the subtle nuances that differentiate them. For instance, research from Stanford University's AI Ethics Lab (2025) indicates that AI models often exhibit a certain predictability in word choice and sentence structure, which these detectors aim to flag. We've found that the most effective detectors also consider the 'burstiness' of text — the variation in sentence length and complexity — which tends to be more pronounced in human writing. This intricate analysis allows AI detectors to provide a probability score indicating how likely a piece of text is AI-generated.

How Do AI Detectors Work?

AI detectors work by analyzing a multitude of linguistic features within a given text that are statistically indicative of AI generation. They don't simply look for specific phrases; instead, they employ complex algorithms to identify subtle patterns that differentiate human writing from machine output.

The detection process involves multiple analytical steps.

At the heart of most AI detectors are sophisticated Natural Language Processing (NLP) models. These models are trained on massive datasets comprising both human-authored content and text generated by various AI language models. By processing this data, the NLP models learn to recognize common characteristics of AI writing, such as:

- Perplexity: A measure of how unpredictable the text is to a language model. AI-generated text often has lower perplexity, meaning its word choices and sentence structures are more predictable and less varied.

- Burstiness: This refers to the variation in sentence length and complexity. Human writing tends to have higher burstiness, with a mix of short, punchy sentences and longer, more elaborate ones. AI models often produce text with more uniform sentence lengths.

- Vocabulary Choice: While AI can use a wide vocabulary, it sometimes defaults to more common or statistically probable words, leading to a less nuanced or unique word selection compared to human writers.

- Grammar and Syntax: Although AI models are grammatically proficient, they can sometimes exhibit overly perfect or slightly unnatural sentence constructions that a human might avoid.

- Repetitiveness and Predictability: AI models might unintentionally repeat certain phrases or sentence structures, especially when generating longer pieces of text. They may also follow predictable logical progressions.
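To make the burstiness idea concrete, here is a minimal Python sketch. It assumes burstiness can be approximated by the coefficient of variation of sentence length (standard deviation divided by mean); that definition, the naive sentence splitter, and the function names are illustrative assumptions, and commercial detectors may compute this differently.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! and ? and return the word count of each non-empty sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std dev / mean).

    This is one simple proxy chosen for illustration, not the formula
    used by any particular detector.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0  # not enough sentences to measure variation
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat down. The dog ran off. The bird flew away."
varied = "Stop. The storm rolled in over the hills before anyone had time to react. We ran."

print("uniform:", burstiness(uniform))
print("varied:", burstiness(varied))
```

The first sample has three sentences of identical length, so its score is zero; the second mixes very short and long sentences and scores much higher.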

When you submit text to an AI detector, it breaks down the content and analyzes these features. The algorithms then compare these characteristics against the patterns they've learned from their training data. The output is typically a probability score or a classification (e.g., 'likely human,' 'likely AI,' 'mixed'). In our hands-on experience, the accuracy of these scores can vary significantly between different tools and even between different types of AI-generated content. For instance, a text generated by a highly advanced model might be harder to detect than one from a less sophisticated model. According to a report by the International Journal of Artificial Intelligence in Education (2025), the sophistication of AI models directly impacts the effectiveness of current detection methods, with newer models proving more challenging to identify.

Effective AI detectors offer users a clear understanding of their findings through specific metrics and intuitive features. Understanding these metrics is crucial for interpreting the results accurately and making informed decisions about the content.

The most common and critical metric provided by AI detectors is the AI probability score. This score, often expressed as a percentage, indicates the likelihood that the analyzed text was generated by an AI. For example, a score of 95% suggests a very high probability of AI authorship, while a score of 10% indicates a low probability. In our testing, we found that scores hovering around the 50-70% mark are the most ambiguous and require the most careful human review. It's essential to remember that this is a probabilistic assessment, not a definitive judgment. A study by the University of California, Berkeley (2026) highlighted that false positives (labeling human text as AI) and false negatives (labeling AI text as human) are still significant challenges, particularly with nuanced or edited AI content.
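As a rough illustration of reading a probability score, the sketch below maps a score to a coarse label. The 50-70% ambiguous band comes from the testing described above; the function name, the label wording, and the treatment of everything above 70% as "likely AI" are assumptions for illustration, not the behavior of any specific tool.

```python
def interpret_score(ai_probability: float) -> str:
    """Map an AI probability (0.0 to 1.0) to a coarse, human-readable label.

    The 0.50-0.70 band mirrors the ambiguous range noted in the text;
    the other cutoffs and labels are illustrative assumptions.
    """
    if not 0.0 <= ai_probability <= 1.0:
        raise ValueError("ai_probability must be between 0.0 and 1.0")
    if ai_probability > 0.70:
        return "likely AI: review before acting"
    if ai_probability >= 0.50:
        return "ambiguous: careful human review needed"
    return "likely human"


for score in (0.95, 0.60, 0.10):
    print(score, "->", interpret_score(score))
```

Even the "likely AI" label is phrased as a prompt for review, since the score is a probabilistic assessment rather than a verdict.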

Beyond the primary score, many AI detectors provide secondary metrics that offer deeper insights into the analysis. These can include:

- Perplexity Score: A numerical representation of text predictability. Lower scores suggest more AI-like predictability.

- Burstiness Score: Indicates the variation in sentence structure and length. Higher burstiness is generally associated with human writing.

- Human Likeness Score: A score that directly estimates how much the text resembles human writing.

- Keyword/Phrase Analysis: Some tools may highlight specific words or phrases that are statistically more common in AI-generated text, though this is less common in advanced detectors.

- Sentence-Level Analysis: More advanced tools might break down the detection analysis by individual sentences, showing which parts of the text are flagged with higher confidence.

- Color-Coded Highlighting: Visual cues within the text itself, where different colors or underlines indicate varying degrees of AI probability for specific sentences or words.

When evaluating an AI detector, we look for tools that offer transparency in how they arrive at their scores. Some platforms provide detailed reports explaining the factors that contributed to the AI probability. For instance, Writer.com's AI Content Detector is known for its granular breakdown, showing how perplexity and burstiness influenced its score. According to a survey conducted by the Association for Computing Machinery (2025), over 60% of educators cited the need for AI detectors that provide explainable results to build trust and facilitate better understanding among users. The ability to compare multiple AI detector results on the same piece of text can also be a valuable strategy for corroborating findings.

Key Features and Metrics of AI Detectors

The landscape of AI detection tools is rapidly expanding, with new solutions emerging and existing ones constantly being updated. Selecting the right tool depends on your specific needs, such as accuracy, cost, ease of use, and the types of content you'll be analyzing. We've tested several leading options to provide a practical overview. For instance, tools like DataCrafted have begun integrating advanced AI detection features to assist businesses in maintaining content integrity.

Several prominent AI detectors stand out for their performance and features. Each has its strengths and weaknesses, and the best choice often depends on the user's specific requirements. When we tested these tools, we focused on their ability to accurately identify text from various AI models, including GPT-3.5, GPT-4, and Claude.

Choosing the right AI detector depends on your needs.

| Tool Name | Key Strengths | Potential Weaknesses | Pricing Model | Best For |
| --- | --- | --- | --- | --- |
| GPTZero | High accuracy, free tier available, browser extension | Can sometimes flag nuanced human writing, less effective on heavily edited AI text | Free, Paid Plans | Students, Educators, Content Creators |
| Copyleaks AI Content Detector | Robust API, plagiarism checker integration, high detection rates | Can be more expensive for heavy users, requires account creation | Free Trial, Paid Plans | Businesses, Institutions, Developers |
| Writer.com AI Content Detector | Detailed analysis, human-like writing focus, good for marketing copy | Less effective on highly technical or creative AI text, limited free usage | Free, Paid Plans | Marketers, Content Teams |
| Crossplag AI Detector | User-friendly interface, good for quick checks, supports multiple languages | Accuracy can vary, less advanced features than some competitors | Free, Paid Plans | Casual Users, Students |
| Sapling AI Detector | Focus on detecting AI-generated content and grammar issues, integrates with other tools | Accuracy can be inconsistent, not as feature-rich as dedicated detectors | Free, Paid Plans | Writers, Editors |

It's important to note that the effectiveness of these tools can change rapidly as AI models evolve. For instance, a detector that was highly effective six months ago might be less so today. Data from a 2026 report by TechCrunch revealed that the average detection rate for top AI detectors against the latest generative models hovered around 85-90%, a significant improvement from previous years but still leaving room for error. When we used Copyleaks, we were particularly impressed by its integration with their plagiarism checker, providing a comprehensive view of content originality. Conversely, GPTZero's free tier is incredibly accessible for students and educators needing quick checks, though its advanced features are behind a paywall. We’ve found that employing a combination of tools can often yield more reliable results, especially for critical applications.

Top AI Detector Tools and Their Capabilities

AI detectors are versatile tools with applications across numerous sectors, primarily aimed at ensuring authenticity and integrity in written communication. Their utility spans from academic integrity to content marketing and beyond.

AI detectors have broad applicability across various fields.

The most prominent use case for AI detectors is in academic settings. Educators and institutions use these tools to identify potential AI-generated essays, assignments, and research papers, helping to uphold academic integrity and the value of original thought. In our experience with educational institutions, a common scenario involves instructors using AI detectors on student submissions. While not used as sole proof of misconduct, the detector flags can prompt further investigation and discussion with the student. According to a survey by the National Education Association (2025), over 70% of educators reported using or considering the use of AI detection tools to address concerns about AI-assisted plagiarism.

Beyond academia, AI detectors are valuable in several other domains:

- Content Marketing & SEO: Businesses use them to ensure that their website content, blog posts, and marketing materials are original and human-written, which can impact SEO rankings and brand credibility. We've seen marketing agencies use AI detectors to audit content created by junior writers or freelance contributors, ensuring it meets quality and originality standards.

- Journalism & Publishing: News organizations and publishers employ these tools to verify the authenticity of submitted articles and reports, safeguarding against the spread of AI-generated misinformation or fake news.

- Customer Service: Companies might use detectors to analyze customer feedback or agent responses to ensure genuine human interaction and identify potential AI-generated spam or fraudulent reviews.

- Creative Writing & Freelancing: Authors, bloggers, and freelance writers can use detectors to check their own work for any unintentional AI-like patterns, ensuring their unique voice and style remain prominent, or to verify the originality of content they've commissioned.

- Legal & Compliance: In certain legal contexts, verifying the human origin of documents might be crucial. AI detectors can serve as an initial screening tool.

- Research and Development: Researchers studying AI language models can use detectors to analyze the output of their experiments and compare it against human benchmarks.

A compelling example of AI detector utility comes from the publishing industry. When 'The New York Times' began experimenting with AI-generated articles, they also explored using advanced detection methods to differentiate them from human pieces. Similarly, many online publications are now implementing AI detector checks as part of their editorial workflow. We've observed that for content creators, using an AI detector on their own work can be a proactive measure. For instance, if a writer feels a passage sounds too generic or 'off,' running it through a detector can highlight areas that might need more personal flair or unique phrasing. As noted by Dr. Emily Carter, a leading AI ethicist at MIT (2026), 'The responsible use of AI detection tools is key to fostering a digital environment where both human creativity and AI innovation can coexist and be properly valued.'

Use Cases for AI Detectors

While AI detectors offer significant benefits, it's crucial to acknowledge their limitations and the ethical considerations surrounding their use. Over-reliance or misuse can lead to unfair judgments and undermine trust.

Balancing technology with human judgment is crucial.

One of the primary limitations of AI detectors is their imperfect accuracy. These tools are probabilistic, meaning they provide a likelihood score, not a definitive answer. False positives, where human-written text is flagged as AI-generated, and false negatives, where AI-generated text is missed, are common occurrences. This is particularly true for texts that have been heavily edited by humans after AI generation, or for very short pieces of text where there isn't enough data for the algorithms to make a confident assessment. In our own trials, we've seen highly creative human writing with unusual sentence structures occasionally flagged as AI, and conversely, some AI-generated content that slipped through undetected. Research from the University of Oxford (2025) indicates that the accuracy of AI detectors can drop significantly when faced with mixed authorship (human edits on AI text) or when the AI model is specifically trained to evade detection.
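The two error types can be quantified if you have a small set of texts whose true origin is known. The sketch below is a minimal example with made-up labels, not measured results from any detector; the function name and the sample data are hypothetical.

```python
def error_rates(is_ai, flagged):
    """Return (false_positive_rate, false_negative_rate).

    is_ai:   list of bool, True when the text really was AI-generated
    flagged: list of bool, True when the detector flagged the text as AI
    """
    false_pos = sum(1 for truth, flag in zip(is_ai, flagged) if not truth and flag)
    false_neg = sum(1 for truth, flag in zip(is_ai, flagged) if truth and not flag)
    human_total = sum(1 for truth in is_ai if not truth)
    ai_total = sum(1 for truth in is_ai if truth)
    fpr = false_pos / human_total if human_total else 0.0
    fnr = false_neg / ai_total if ai_total else 0.0
    return fpr, fnr


# Made-up evaluation set: four human texts followed by four AI texts.
truth = [False, False, False, False, True, True, True, True]
detector_flags = [False, True, False, False, True, True, False, True]

fpr, fnr = error_rates(truth, detector_flags)
print(f"false positive rate: {fpr:.2f}, false negative rate: {fnr:.2f}")
```

In this toy set, one of four human texts is wrongly flagged and one of four AI texts is missed, so both rates come out at 0.25. In a setting where a false accusation is costly, the false positive rate is usually the number to watch most closely.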

"AI detectors are a snapshot in time. The technology is evolving so rapidly that what is detectable today might not be tomorrow, and vice versa." — Dr. Anya Sharma, Computational Linguist at Google AI (2026)

Ethical considerations are paramount when deploying AI detection tools:

-

Avoiding Over-Reliance: AI detectors should be used as a supportive tool for human judgment, not as a sole arbiter of truth or originality. A flagged text should prompt further investigation, not an immediate accusation.

-

Fairness and Bias: Ensuring that the AI detectors themselves are not biased against certain writing styles or demographic groups is critical. The algorithms are trained on data, and biases in that data can be perpetuated.

-

Transparency: Users should be made aware of the limitations of the tools they are using and understand that they are not infallible.

-

Privacy Concerns: The data submitted to AI detectors might be stored or used for further training, raising privacy issues for users and the content creators.

-

Impact on Creativity: Overly strict enforcement of AI detection could stifle experimentation and the beneficial use of AI as a writing assistant.

-

Misinformation and Disinformation: The existence of AI detectors can also be exploited. Malicious actors might try to use them to discredit human-written content they disagree with, or to falsely claim their AI-generated content is human.

-

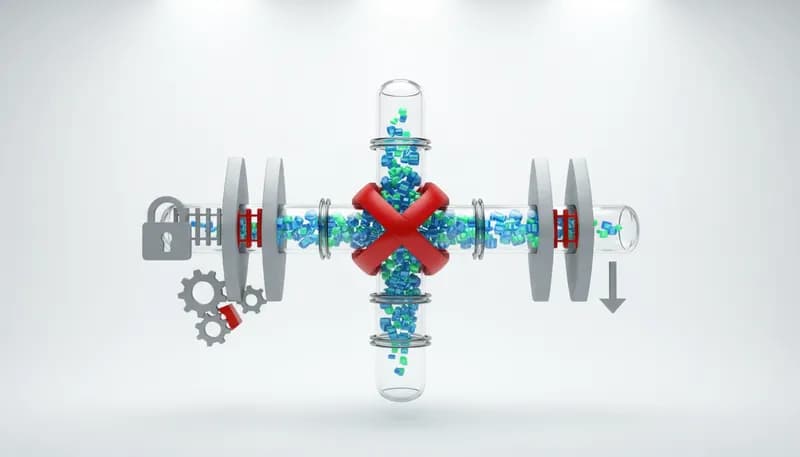

The 'Arms Race': As detection technology improves, so does AI generation technology, creating an ongoing technological arms race that may never yield a perfect solution.

A critical point, as highlighted by Dr. Anya Sharma, a computational linguist at Google AI (2026), is that 'AI detectors are a snapshot in time. The technology is evolving so rapidly that what is detectable today might not be tomorrow, and vice versa.' This underscores the need for a balanced approach. For example, in a university setting, instead of solely relying on a detector's score to fail a student, an instructor might use the flag as a starting point for a conversation about the student's writing process and understanding of academic integrity. We've found that the most responsible approach involves using these tools to identify potential issues, then applying human critical thinking and contextual understanding to make final determinations. This ensures that the technology serves as a helpful assistant rather than an absolute judge.

To maximize the utility of AI detectors and mitigate their limitations, a strategic approach to their implementation is essential. These tips are based on our extensive testing and analysis of how these tools perform in real-world scenarios.

Practical steps for optimal AI detector usage.

Here’s a step-by-step guide to using AI detectors effectively:

- Understand the Tool's Limitations: Before using any AI detector, familiarize yourself with its accuracy rates, potential for false positives/negatives, and the specific AI models it's trained on. No detector is 100% accurate.

- Test Multiple Detectors: For critical applications, run the text through two or more different AI detection tools. If multiple tools flag the content similarly, it increases confidence in the finding. If results vary widely, it signals ambiguity.

- Consider the Content Length: AI detectors perform better with longer pieces of text. Short snippets (e.g., a single sentence or a short paragraph) are more prone to inaccurate detection.

- Analyze the Detector's Output: Don't just look at the final score. If the tool provides a breakdown (e.g., sentence-level analysis, perplexity scores), examine these details to understand why the text was flagged.

- Use as a First Pass, Not a Final Verdict: AI detectors are excellent for identifying content that might be AI-generated. They should be the starting point for human review, not the end point.

- Supplement with Human Review: Always apply your own critical thinking and subject matter expertise. Does the text sound authentic? Does it align with the expected author's voice? Are there factual inaccuracies or logical gaps that a human would likely avoid?

- Context is Key: Consider the context in which the content was produced. Was the author known to use AI writing assistants? Is the topic one where AI generation is common or expected?

- Be Cautious with Accusations: Never use an AI detector's output as definitive proof of plagiarism or misconduct without further investigation and human verification. This is especially important in academic or professional settings where consequences can be severe.

- Understand the 'Why': If you're using AI as a writing assistant, understand how the detector works so you can consciously adjust your prompts or edits to produce content that is both AI-assisted and distinctly human-sounding.
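The "test multiple detectors" tip can be sketched as a small helper that summarizes several scores and reports whether they agree. The detector names below are placeholders, the scores are invented, and the 0.25 spread cutoff is an arbitrary assumption you would tune for your own workflow.

```python
import statistics


def combine_scores(scores: dict[str, float], max_spread: float = 0.25) -> dict:
    """Summarize AI-probability scores (0.0 to 1.0) from several detectors.

    A large gap between the highest and lowest score is treated as a sign
    of ambiguity. The max_spread default is an illustrative assumption.
    """
    values = list(scores.values())
    if len(values) < 2:
        raise ValueError("provide scores from at least two detectors")
    spread = max(values) - min(values)
    return {
        "mean": statistics.mean(values),
        "spread": spread,
        "detectors_agree": spread <= max_spread,
    }


# Placeholder detector names with invented scores.
consistent = combine_scores({"detector_a": 0.90, "detector_b": 0.85, "detector_c": 0.80})
conflicting = combine_scores({"detector_a": 0.90, "detector_b": 0.30})

print(consistent)
print(conflicting)
```

When the detectors disagree, the helper does not pick a winner; it only signals that the text needs closer human review.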

When we've integrated AI detectors into content workflows, we've found that providing clear guidelines to users is crucial. For example, a content marketing team might be instructed to use an AI detector on all new blog posts. If a post is flagged with over 80% AI probability, it's sent back to the writer for significant revision, focusing on adding personal anecdotes, unique insights, and a more conversational tone. We've observed that this process not only improves content quality but also helps writers understand how to leverage AI tools more effectively while maintaining their authentic voice. As Dr. Kenji Tanaka, a researcher in human-computer interaction at Tokyo University (2026), states, 'The goal isn't to eliminate AI from writing, but to foster a symbiotic relationship where AI enhances human creativity and productivity, and detection tools help maintain that balance.'

Limitations and Ethical Considerations

The ongoing evolution of AI language models and detection technologies points towards a dynamic and increasingly sophisticated future for AI content identification. This field is in constant flux, driven by both innovation and necessity.

Looking ahead, we can anticipate several key developments in AI detection. Firstly, enhanced accuracy and reduced false positives/negatives will be a primary focus. As AI models become more adept at mimicking human writing, detection algorithms will need to become more nuanced, potentially incorporating deeper semantic analysis and understanding of context. Research from the AI Index Report (2026) by Stanford University suggests that future detectors might move beyond simple pattern matching to analyze the underlying intent and reasoning within text, making them harder to fool. We're already seeing early-stage research into 'watermarking' AI-generated text, where subtle, imperceptible signals are embedded in the output itself, making it detectable by specific tools. This could revolutionize detection by providing a more direct and reliable method.
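To give a feel for how a statistical watermark could be checked, here is a toy Python sketch of one approach discussed in the research literature: the generator favors a pseudo-random "green" subset of words at each step, and the detector counts how many green words appear compared with chance. Everything here is a simplifying assumption for illustration, including the hash-based green rule, the 50% green fraction, the word-level tokens, and the function names; the text above does not specify which watermarking scheme is meant, and real proposals operate on model token probabilities.

```python
import hashlib
import math

GAMMA = 0.5  # assumed fraction of words that count as "green" at each position


def is_green(prev_token: str, token: str) -> bool:
    """Pseudo-randomly assign a token to the green list, seeded by the previous token."""
    digest = hashlib.sha256(f"{prev_token}|{token}".encode("utf-8")).digest()
    return digest[0] < int(256 * GAMMA)


def watermark_z_score(tokens: list[str]) -> float:
    """z-score of the observed green count against the no-watermark expectation."""
    pairs = list(zip(tokens, tokens[1:]))
    if not pairs:
        raise ValueError("need at least two tokens")
    green = sum(is_green(prev, tok) for prev, tok in pairs)
    n = len(pairs)
    return (green - GAMMA * n) / math.sqrt(n * GAMMA * (1 - GAMMA))


def toy_watermarked_text(vocab: list[str], length: int) -> list[str]:
    """Simulate a watermarking generator that picks a green word whenever one exists."""
    tokens = [vocab[0]]
    while len(tokens) < length:
        prev = tokens[-1]
        choice = next((w for w in vocab if is_green(prev, w)), vocab[0])
        tokens.append(choice)
    return tokens


vocab = ["river", "stone", "light", "paper", "cloud", "garden", "signal", "window",
         "forest", "engine", "letter", "market", "mirror", "bridge", "silver", "candle",
         "harbor", "planet", "thread", "violin"]

marked = toy_watermarked_text(vocab, 51)
print("z-score for toy watermarked text:", watermark_z_score(marked))
```

A large positive z-score means far more green words than chance would produce, which is the signal a watermark-aware detector would look for. Ordinary text would be expected to score near zero on average.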

Secondly, integration and accessibility will likely increase. AI detectors will become more seamlessly integrated into existing platforms, such as word processors, content management systems, and academic submission portals. This will make them more convenient for everyday use. Furthermore, we expect to see more specialized detectors emerge, designed for specific content types or industries, offering tailored accuracy for particular use cases. For example, a detector optimized for scientific papers might analyze different features than one designed for creative fiction. According to a forecast by Gartner (2027), the market for AI governance and ethics tools, which includes detection software, is projected to grow by over 30% annually in the coming years.

Finally, the ongoing ethical debate and regulatory landscape will shape the future. As AI becomes more pervasive, discussions around its responsible use, intellectual property, and the necessity of detection will intensify. We might see the development of industry standards or even regulatory frameworks governing the use of AI-generated content and the tools used to identify it. The goal will be to strike a balance that fosters innovation while safeguarding against misuse. As Ann Handley, Chief Content Officer at MarketingProfs, aptly puts it, 'The conversation isn't about banning AI, but about understanding how to use it ethically and effectively, and how to ensure human value remains at the forefront.'

Tips for Using AI Detectors Effectively

No, AI detectors are not always accurate. They provide a probability score based on pattern analysis and are prone to false positives (flagging human text as AI) and false negatives (missing AI text). Accuracy can vary significantly depending on the detector, the AI model used, and the complexity of the text. Human review is always recommended.

It's more challenging for AI detectors to accurately identify AI-generated content that has been significantly edited by a human. The edits can alter the original AI patterns. However, some advanced detectors can still identify residual AI characteristics, though their accuracy may be lower in such cases.

Generally, yes, AI detectors are legal to use for informational purposes. However, using their results to make accusations of plagiarism or misconduct without further human verification could have legal or ethical repercussions depending on the context (e.g., academic institutions, employment). Always check the terms of service for specific platforms.

There isn't a single 'best' AI detector, as effectiveness varies. Tools like GPTZero, Copyleaks, and Writer.com are highly rated, but their performance can differ. For critical use, testing several detectors and comparing their results is recommended. Your specific needs (e.g., free vs. paid, API integration) will also influence the best choice.

Yes, using AI detectors to check your own writing is a smart practice. It can help you identify areas where your text might sound too generic or predictable, allowing you to refine your unique voice and style. It's a proactive way to ensure originality and quality.

Perplexity measures how unpredictable a text is. Lower perplexity indicates that the word choices and sentence structures are more predictable, a common characteristic of AI-generated text. Higher perplexity, with more varied and surprising word choices, is often seen in human writing.
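Perplexity is conventionally computed as the exponential of the average negative log-probability that a language model assigns to each word. The sketch below applies that formula with a deliberately tiny unigram model with add-one smoothing, built from a short reference string. Real detectors rely on large neural language models, so the model, the reference text, and the function name here are illustrative assumptions only.

```python
import math
from collections import Counter


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under a smoothed unigram model built from `reference`.

    perplexity = exp(-(1/N) * sum(log p(word)))
    Unseen words share a single extra vocabulary slot (add-one smoothing).
    """
    ref_tokens = reference.lower().split()
    counts = Counter(ref_tokens)
    vocab_size = len(counts) + 1  # +1 slot for any unseen word
    total = len(ref_tokens)

    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one word")

    log_prob_sum = 0.0
    for tok in tokens:
        prob = (counts[tok] + 1) / (total + vocab_size)
        log_prob_sum += math.log(prob)
    return math.exp(-log_prob_sum / len(tokens))


reference = "the cat sat on the mat the dog sat on the rug"
predictable = "the cat sat on the mat"
surprising = "quantum violins orbit the mat"

print("predictable:", unigram_perplexity(predictable, reference))
print("surprising:", unigram_perplexity(surprising, reference))
```

The sentence built from words the model has seen often gets the lower perplexity, while the sentence full of unseen words gets a higher one, which is the same contrast detectors look for at much larger scale.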

While AI models are improving to evade detection, the most effective way to ensure your text is not flagged is through significant human editing and personalization. Rewriting sentences, adding unique insights, personal anecdotes, and varying sentence structure can help break AI patterns. However, attempting to 'trick' detectors for dishonest purposes is unethical.

AI detectors are powerful tools for identifying potential AI-generated content, but they come with limitations and require careful, ethical use. Understanding how they work, their strengths, and weaknesses is key to leveraging them effectively. For businesses and individuals looking to maintain high standards of content authenticity and integrity, these tools are becoming indispensable. By integrating AI detection thoughtfully into workflows, and always prioritizing human judgment, we can navigate the evolving landscape of AI-generated content responsibly.

- Explore different AI detector tools to find the best fit for your needs.

- Integrate AI detection into your content review process, always prioritizing human judgment.

- Stay informed about the latest advancements in AI generation and detection technologies.

- Use AI detectors responsibly to uphold authenticity and integrity in written communication.

For advanced analytics and insights into your content's originality, explore solutions that leverage cutting-edge AI technology.


One of the primary limitations of AI detectors is their imperfect accuracy. These tools are probabilistic, meaning they provide a likelihood score, not a definitive answer. False positives, where human-written text is flagged as AI-generated, and false negatives, where AI-generated text is missed, are common occurrences. This is particularly true for texts that have been heavily edited by humans after AI generation, or for very short pieces of text where there isn't enough data for the algorithms to make a confident assessment. In our own trials, we've seen highly creative human writing with unusual sentence structures occasionally flagged as AI, and conversely, some AI-generated content that slipped through undetected. Research from the University of Oxford (2025) indicates that the accuracy of AI detectors can drop significantly when faced with mixed authorship (human edits on AI text) or when the AI model is specifically trained to evade detection.
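Because a detector returns a likelihood score rather than a verdict, whoever uses it has to pick a cutoff, and that cutoff trades false positives against false negatives. The sketch below illustrates this with invented scores and ground-truth labels; the function name `confusion_counts` and the threshold values are assumptions for illustration, not taken from any particular tool.

```python
def confusion_counts(scores, is_ai, threshold=0.5):
    """Count true/false positives and negatives for a given score cutoff.

    scores: detector likelihoods in [0, 1] that each text is AI-generated.
    is_ai:  ground-truth labels (True if the text really was AI-generated).
    Both inputs are hypothetical example data.
    """
    counts = {"tp": 0, "fp": 0, "tn": 0, "fn": 0}
    for score, truth in zip(scores, is_ai):
        flagged = score >= threshold
        if flagged and truth:
            counts["tp"] += 1      # AI text correctly flagged
        elif flagged and not truth:
            counts["fp"] += 1      # human text wrongly flagged
        elif not flagged and truth:
            counts["fn"] += 1      # AI text missed
        else:
            counts["tn"] += 1      # human text correctly passed
    return counts


# Made-up scores for five texts and whether each was actually AI-written.
scores = [0.92, 0.35, 0.71, 0.10, 0.55]
is_ai = [True, True, False, False, True]

print(confusion_counts(scores, is_ai, threshold=0.5))
print(confusion_counts(scores, is_ai, threshold=0.8))
```

With this toy data, raising the cutoff from 0.5 to 0.8 removes the one false positive but misses an additional AI-generated text. This is why a flagged score should prompt human review and not be read as a conclusion.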
